Sophia Pi (π)

ML Student Researcher. Stick Figure Enthusiast. Serial Sidequester. D1 Yapper.

Hi! I'm a student researcher at Northwestern excited about learning theory, AI4Science, networks, economic perspectives in ML, and anything that pops up on my Twitter feed.

On the theoretical side, my research focuses on the foundations of deep learning and graph algorithms; on the applied side, I've worked on agentic model routing for SWE as well as molecular generation for drug discovery.

I'm passionate about both excavating the abstract mathematical laws that govern how individuals, machines, and societies learn, behave, and evolve, and building concrete systems that improve the lives of real people.

Recent News

Our preprint, "On Flow Matching KL Divergence," is now available on arXiv.

I started a summer research internship at Carnegie Mellon's Language Technologies Institute, working under Professor Graham Neubig. If you're in Pittsburgh this summer, I'd love to connect!

I presented my research proposal, "Exploring Topological Properties of Artificial Neural Networks," at AAAI 2025.

I presented my RIPS project, "Highlighting Limitations of Generative AI in Early Drug Discovery," at NCUWM 2025.

I co-presented my RIPS project, "Highlighting Limitations of Generative AI in Early Drug Discovery," at JMM 2025.

I was awarded the Peter and Adrienne Barris Outstanding Peer Mentor Award by the NU CS Department for my work as a PM for CS 212 (Mathematical Foundations of Computer Science).

Our paper, "On Statistical Rates and Provably Efficient Criteria of Latent Diffusion Transformers (DiTs)," was accepted to NeurIPS 2024.

I started at the RIPS (Research in Industrial Projects for Students) program at IPAM (Institute for Pure and Applied Mathematics), working with precision medicine company Relay Therapeutics.

Alpime Health, a healthtech startup I co-founded developing AI-integrated OCR systems to accelerate digital health systems in West Africa, won 1st place in the Life Sciences and Medical Innovations Track at VentureCat 2024.

My team's paper, "Feed-Forward Assisted Transformers for Time Efficient Fine-Tuning," won 3rd place at U Toronto's annual ProjectX, the largest undergraduate ML competition in North America.

I presented my summer research project, "Tighter Convergence Guarantees for the Label Propagation Algorithm on the 2-Community Stochastic Block Model," at the NU CS Undergraduate Research Showcase.

My friend Mithra Karamchedu and I went on a sidequest about Hamming distances and ended up contributing two sequences (A365618 and A367055) to the OEIS (On-Line Encyclopedia of Integer Sequences).

I received the R&B Feldmann Fellowship to conduct summer research on random graphs, advised by Professor Miklos Racz.

Selected Projects

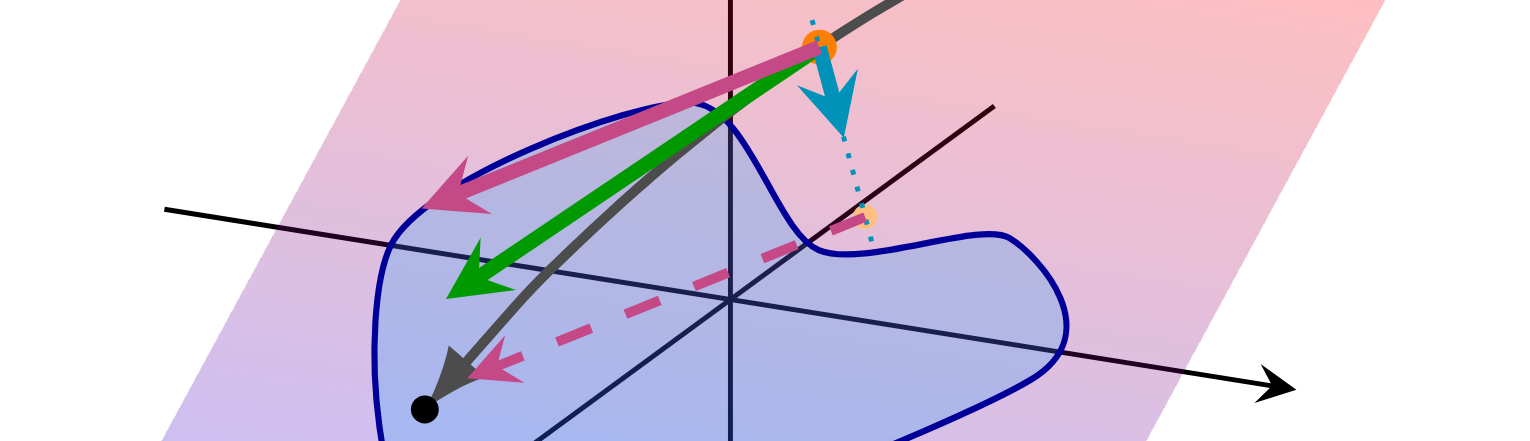

Learning Manifold Data With Flow Matching

Escaping the Curse of Dimensionality via the Manifold Hypothesis Part II: Electric Boogaloo

Statistical Rates of Diffusion Transformers (NeurIPS '24)

My cold plunge into statistical learning theory: escaping the curse of dimensionality

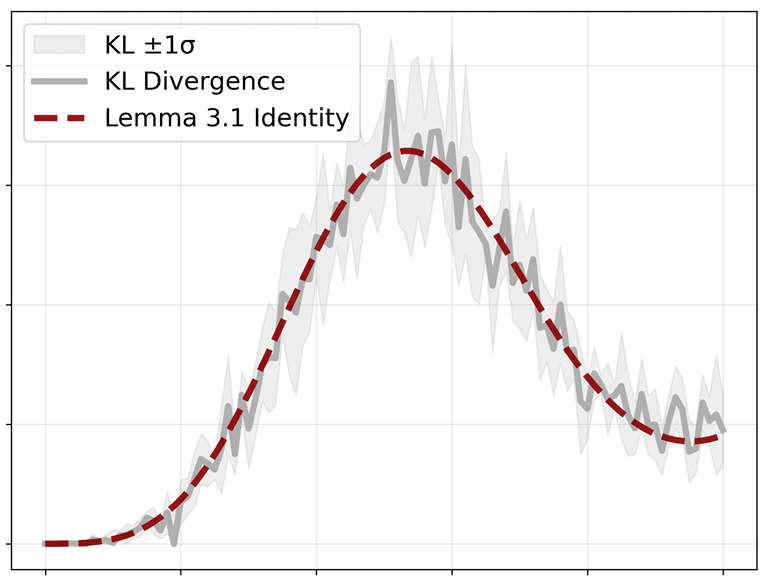

On Flow Matching KL Divergence

How well is my flow matching model actually learning? A numerical interlude

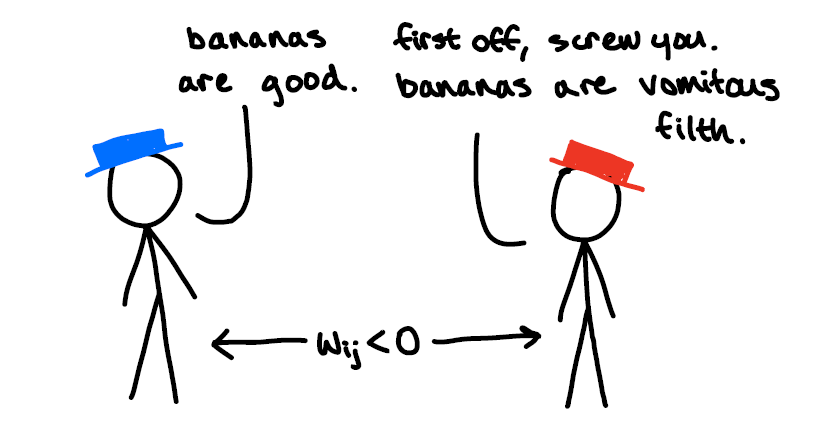

Information, Repelling, and Balance in Antagonistic Networks

The Hitchhiker's Guide to Winning Twitter Wars

Generative AI for Drug Discovery (RIPS - JMM Oral '25, NCUWM Oral '25)

All SMILES (or perhaps not?)

Label Propagation Algorithms on Balanced Stochastic Block Models

When does copying your neighbor's opinions fail?

Exploring Topological Properties of ANNs (AAAI UC '25)

Ratatouille x Waymo Crossover Episode

Feed-Forward Assisted Transformers (ProjectX - 3rd Place)

Why fine-tune the whole model when you can just train a tiny network on top?