Learning Manifold Data With Flow Matching

I'm grateful to my co-authors Jerry Yao-Chieh Hu, Mingcheng Lu, Maojiang Su, Weimin Wu, Minshou Chen, and Prof. Han Liu for their collaboration and insights throughout this project. I really enjoyed the geometric flavor of this work. This project forced me to look beyond the algebraic, statistical, and calculus-based symbolic approaches I was comfortable with and develop a much deeper understanding of the geometric picture driving the results. The velocity decomposition we proved came from mapping out the geometry of conditional flows and recognizing the right object to decompose, not from manipulating equations until something worked.

Abstract

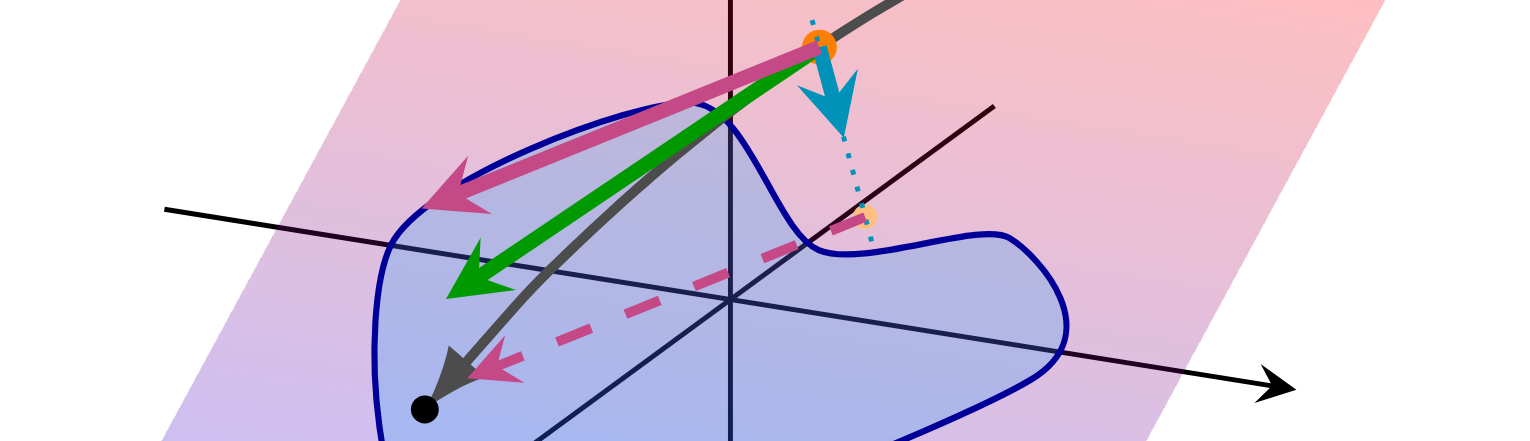

We study flow-matching transformers when data lie on low-dimensional manifolds. Following our NeurIPS work on diffusion transformers, we ask whether flow matching can exploit manifold structure through an analogous decomposition. We prove a velocity decomposition theorem: under a linear latent subspace assumption, the optimal velocity splits into tangent and orthogonal components that can be learned separately. This yields risk decoupling, identifiability of on-manifold dynamics, and built-in stability.
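To make the decomposition concrete, here is a minimal numerical sketch of a toy case, not the construction from the paper. It rests on several assumptions of mine that the abstract does not state: data of the form `x1 = B z` with `B` having orthonormal columns, a Gaussian latent `z` with a hypothetical covariance `S` (chosen only so that everything has a closed form), standard Gaussian noise `x0`, and the linear path `x_t = (1 - t) x0 + t x1`. Under these assumptions the marginal velocity `E[x1 - x0 | x_t = x]` can be computed directly in ambient space and compared against the sum of a tangent part, which only sees the latent coordinates `B^T x`, and an orthogonal part, which does not depend on the data distribution at all.

```python
import numpy as np

rng = np.random.default_rng(0)
D, d, t = 5, 2, 0.3  # ambient dim, latent dim, time in (0, 1); all illustrative

# Orthonormal basis B (D x d) of the assumed latent subspace.
B, _ = np.linalg.qr(rng.standard_normal((D, d)))

# Hypothetical Gaussian latent covariance S (symmetric positive definite).
A = rng.standard_normal((d, d))
S = A @ A.T + np.eye(d)


def full_velocity(x, t):
    """E[x1 - x0 | x_t = x], computed in ambient space via Gaussian conditioning."""
    cross = t * B @ S @ B.T - (1 - t) * np.eye(D)           # Cov(x1 - x0, x_t)
    cov = (1 - t) ** 2 * np.eye(D) + t ** 2 * B @ S @ B.T   # Cov(x_t)
    return cross @ np.linalg.solve(cov, x)


def tangent_velocity(x, t):
    """Latent-space velocity on h = B^T x, lifted back to ambient space by B."""
    h = B.T @ x
    cross = t * S - (1 - t) * np.eye(d)
    cov = (1 - t) ** 2 * np.eye(d) + t ** 2 * S
    return B @ (cross @ np.linalg.solve(cov, h))


def orthogonal_velocity(x, t):
    """Off-subspace part: shrinks the component of x orthogonal to span(B)."""
    x_perp = x - B @ (B.T @ x)
    return -x_perp / (1 - t)


x = rng.standard_normal(D)
v = full_velocity(x, t)
v_par = tangent_velocity(x, t)
v_perp = orthogonal_velocity(x, t)

decomposition_error = np.max(np.abs(v - (v_par + v_perp)))
inner = float(v_par @ v_perp)
print("max |v - (v_par + v_perp)| =", decomposition_error)
print("<v_par, v_perp> =", inner)
```

In this toy setting the two pieces are orthogonal and sum to the full velocity up to floating-point error. The orthogonal piece has the fixed form `-(I - B B^T) x / (1 - t)`, which always points back toward the subspace, while the tangent piece carries all of the dependence on the latent distribution. This is only meant to build intuition for why the two components might be learned separately; the theorem itself covers a more general setting than the Gaussian latent used here.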

The geometric insight motivated a two-headed transformer architecture and enabled us to establish intrinsic-dimension-optimal minimax rates for velocity approximation, velocity estimation, and distribution estimation. Together, these results show how flow-matching transformers escape the curse of dimensionality by exploiting intrinsic data structure.